[tiny experiment] Create a Transformer from scratch

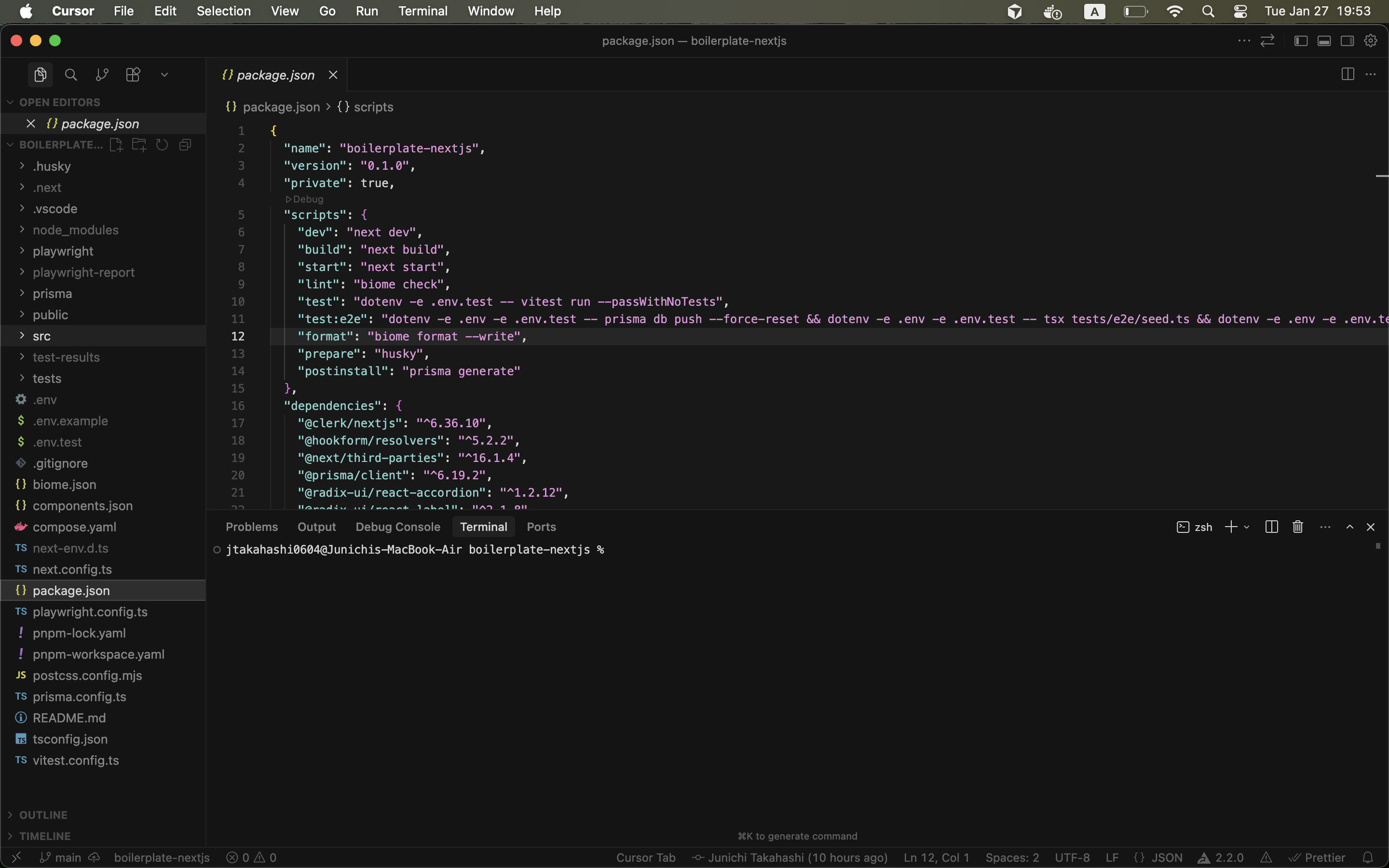

https://github.com/jtakahashi0604/tiny-experiment-nn-transformer

Daily Recap - 2026-01-27 - Day 3

📚 Text:

"Let's build GPT" by @karpathy

✏️ Note:

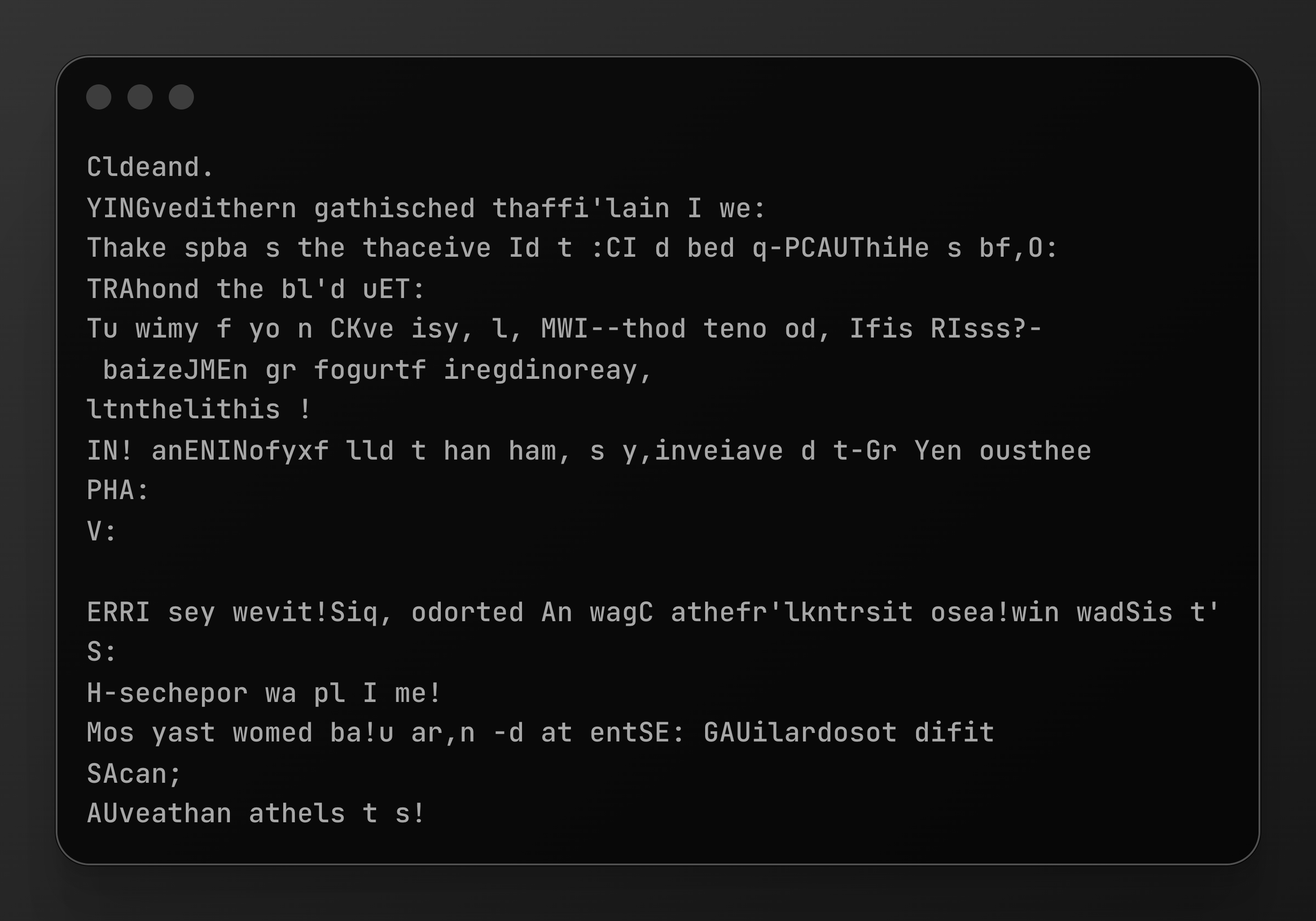

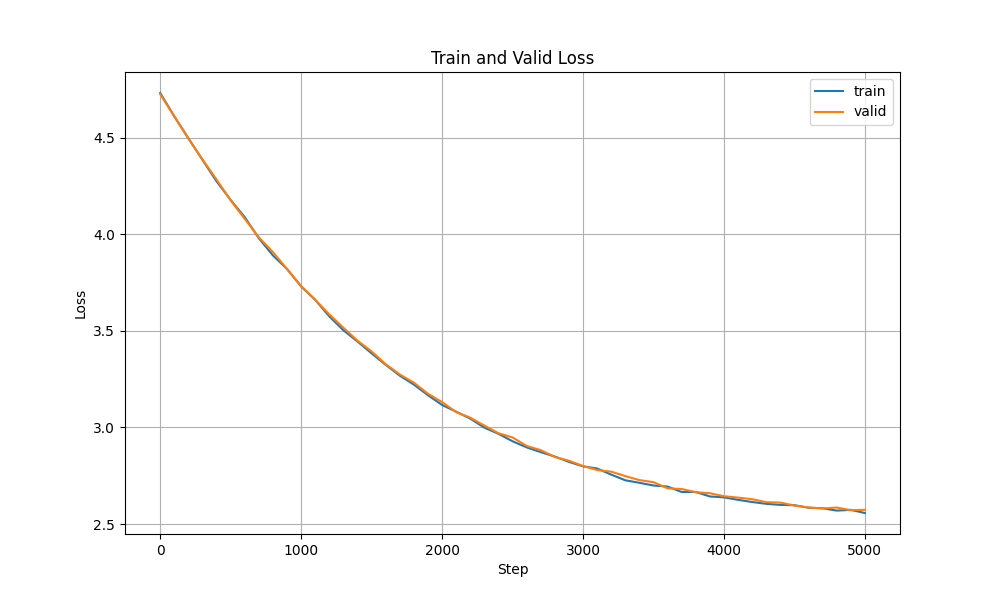

Finally got the Transformer algorithm running. It’s alive 🤖

Huge thanks to @karpathy for the awesome tutorial.

Daily Recap - 2026-01-23 - Day 2

📚 Text:

"Let's build GPT" by @karpathy

✏️ Note:

Today, I learned about lower triangular matrices.

I’m amazed that a single matrix multiplication with one can replace a for loop over the previous tokens.
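To make the trick concrete, here is a minimal sketch of my own (not code from the tutorial) that computes a running average two ways: with an explicit loop, and with a lower triangular weight matrix. It uses plain Python lists so it runs without dependencies. The helper names `running_mean_loop`, `averaging_matrix`, and `matvec` are hypothetical, and in a tensor library the `matvec` step would be one matrix multiplication.

```python
def running_mean_loop(xs):
    """Average of xs[0..t] for every position t, using an explicit loop."""
    out = []
    total = 0.0
    for t, x in enumerate(xs):
        total += x
        out.append(total / (t + 1))
    return out


def averaging_matrix(n):
    """Lower triangular n x n matrix: row t holds 1/(t+1) in columns 0..t, 0 elsewhere."""
    return [[1.0 / (t + 1) if s <= t else 0.0 for s in range(n)] for t in range(n)]


def matvec(matrix, vector):
    """Plain matrix-vector product (stands in for a tensor library's matmul)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


xs = [2.0, 4.0, 6.0, 8.0]
loop_result = running_mean_loop(xs)
matrix_result = matvec(averaging_matrix(len(xs)), xs)

print(loop_result)
print(matrix_result)
```

Because every entry above the diagonal is zero, position t only ever sees positions 0..t. That appears to be the same property that keeps a decoder from looking at future tokens.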

Daily Recap - 2026-01-21 - Day 1

📚 Text:

"Let's build GPT" by @karpathy

✏️ Note:

Just started diving into the Transformer architecture.

Today, I implemented a BigramLanguageModel - a simple model that predicts the next character from the current character.

It's very simple, but seeing it output somewhat "plausible" text is a great start.
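As a rough illustration of the bigram idea, here is a dependency-free sketch. It is a deliberately swapped-in technique: it counts character pairs, rather than training a neural model like the BigramLanguageModel I implemented. The function names `train_bigram` and `generate` and the toy training string are my own assumptions for this example.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Count how often each character is followed by each other character."""
    counts = defaultdict(Counter)
    for cur, nxt in zip(text, text[1:]):
        counts[cur][nxt] += 1
    return counts


def generate(counts, start, length, seed=0):
    """Sample up to `length` more characters, each conditioned only on the previous one."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        followers = counts.get(out[-1])
        if not followers:
            break  # the current character was never followed by anything in training
        chars = list(followers.keys())
        weights = list(followers.values())
        out.append(rng.choices(chars, weights=weights, k=1)[0])
    return "".join(out)


counts = train_bigram("hello world, hello there")
sample = generate(counts, "h", 20)
print(sample)
```

Each character depends only on the one before it, so the output has locally plausible pairs but no longer-range structure.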